In general, Panorama Server doesn’t have anything that would bog it down. With one exception I’ll discuss below, it does not run any background tasks, and except for searching for records, it doesn’t have any loops. It does search the database every time you lock or unlock a record, but this is a super fast search based on the record ID number. The record ID number is built into each record, so the performance of this search won’t depend on how many fields are in the record. However, it will take longer to find a record at the end of the database than one at the beginning. But since you say you are always dealing with the same record, the search time should be constant. Also, it would take a really huge database to get up to the kind of delay you are talking about, probably at least millions of records.
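To make the search behavior concrete, here is a rough Python sketch. It assumes a plain front-to-back scan over the record IDs, which matches the description above but is not Panorama's actual code; `find_by_id` and the record layout are made up for illustration.

```python
def find_by_id(records, record_id):
    """Scan from the front; return (position, number of IDs compared)."""
    for position, record in enumerate(records):
        # Only the ID is inspected, never the field data.
        if record["id"] == record_id:
            return position, position + 1
    return None, len(records)

# Hypothetical databases: same record count, different number of fields.
narrow = [{"id": n, "fields": ["a"]} for n in range(1, 10_001)]
wide = [{"id": n, "fields": ["a"] * 20} for n in range(1, 10_001)]

first = find_by_id(narrow, 1)            # record at the start
last = find_by_id(narrow, 10_000)        # record at the end
wide_last = find_by_id(wide, 10_000)     # same position, more fields
```

In this model the work grows with the record's position (1 comparison versus 10,000) and is the same for the narrow and wide records, and looking up the same record repeatedly always costs the same.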

So let’s discuss the one background task the server does run - auto-save. Panorama is of course RAM based, but the data eventually needs to be saved permanently to disk. Since saving to disk is relatively slow compared to in-RAM operations, the server doesn’t do this every time a change is made. Instead, it sets a delay to save later. If you make a bunch of changes during the period of this delay, they can all go out in one save, which can save a lot of time. On the other hand, if Panorama crashes for some reason during this delay (for example because of a power failure), the changes won’t have been saved to the database file (though they are also saved in the journal for redundancy). So you may want the delay longer or shorter, and you can change this delay in the Settings.
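The trade-off can be sketched with a small simulation. This is only a model under my own assumptions: the first change starts the delay, later changes inside that window ride along with the same save, and times are in milliseconds. The `AutoSaver` class and `count_saves` helper are hypothetical names, not anything in Panorama.

```python
class AutoSaver:
    """Toy model of a delayed save: the first unsaved change starts a timer."""

    def __init__(self, delay_ms):
        self.delay_ms = delay_ms
        self.due_at = None   # time at which the pending save should run
        self.saves = 0

    def advance(self, now_ms):
        # Run the pending save if its delay has expired.
        if self.due_at is not None and now_ms >= self.due_at:
            self.saves += 1
            self.due_at = None

    def change(self, now_ms):
        self.advance(now_ms)
        if self.due_at is None:
            # No save pending yet, so schedule one.
            self.due_at = now_ms + self.delay_ms


def count_saves(delay_ms, change_times_ms):
    saver = AutoSaver(delay_ms)
    for t in change_times_ms:
        saver.change(t)
    # Let the last pending save run.
    saver.advance(change_times_ms[-1] + delay_ms)
    return saver.saves


# Ten changes, one every 200 ms.
changes = list(range(0, 2000, 200))
saves_no_delay = count_saves(0, changes)        # one save per change
saves_1s = count_saves(1000, changes)           # changes are batched
saves_1min = count_saves(60_000, changes)       # everything in one save
```

With these made-up timings the model does 10 saves with no delay, 2 saves with a 1 second delay, and 1 save with a 1 minute delay. The longer delay means fewer saves, but also a longer window in which the file on disk is behind the data in RAM.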

So maybe the delays you are seeing are caused when the auto-save happens? That still seems like a very long time to save a 100 MB database, but maybe? A good test might be to set the auto-save delay to zero. This will cause the auto-save to happen immediately. If auto-save is the cause of the delays, then you might see the delay every time you run through the loop.

Note - you won’t see any auto-save delay unless you perform another operation immediately. When the client makes a request, the server doesn’t wait for the save to complete before returning the results of the request to the client. Instead, the save happens after the request is finished. So you’ll only notice the auto-save delay if you turn around and immediately make another request. If the server is in the middle of performing an auto-save, the next request will be delayed until the auto-save is finished.
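Here is a rough Python model of that timing, assuming a server that handles one thing at a time and starts a save right after each reply (in other words, an auto-save delay of zero). The function name and all of the numbers are invented for illustration; the 10 second save is just a stand-in for the kind of delay being reported.

```python
def request_latencies(arrivals_ms, service_ms, save_ms):
    """Return the time each client waits for its reply, in milliseconds.

    Assumed model: the reply is sent first, then the save runs, and any
    request that arrives during the save waits until the save is finished.
    """
    busy_until = 0
    latencies = []
    for arrival in arrivals_ms:
        start = max(arrival, busy_until)      # wait out a save in progress
        reply_at = start + service_ms
        latencies.append(reply_at - arrival)
        busy_until = reply_at + save_ms       # save happens after the reply
    return latencies


# Request 2 arrives right after request 1; request 3 arrives much later.
latencies = request_latencies(
    arrivals_ms=[0, 10, 30_000], service_ms=5, save_ms=10_000
)
```

In this model the first request returns in 5 ms, the second one is stuck for 10,000 ms behind the save triggered by the first, and the third (which arrives after the saves are done) is back to 5 ms.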

If auto-save is the source of these delays, you will be able to significantly mitigate them by increasing the auto-save delay. The default is 1 second, but it can be increased to anything you want, even minutes.

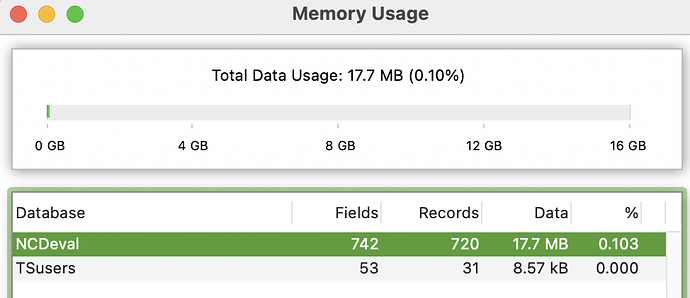

The Finder shows the NCDeval file at 94.6 MB and TSusers at 67.6 MB.

I imagine you are mentioning this because the memory usage window shows much lower values of 17.7 MB and 8.57 kB. This is because the memory usage window ONLY shows the amount of memory used by the DATA. The rest of the size you see in the Finder is the space used by forms and procedures. So your databases are mostly forms and procedures, especially the TSusers file, which appears to be less than 1% data. Procedures are small, so maybe you have tens of megabytes of forms? I would think the only way to do that would be to have tens of megabytes of static images in the forms.
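For what it's worth, here is the arithmetic behind that, as a quick Python check. It assumes decimal units (1 MB = 1,000 kB) and that the two memory figures line up with NCDeval and TSusers in that order.

```python
KB = 1_000
MB = 1_000_000

def data_share(data_bytes, file_bytes):
    """Fraction of the file on disk that is actual data."""
    return data_bytes / file_bytes

ncdeval_share = data_share(17.7 * MB, 94.6 * MB)
tsusers_share = data_share(8.57 * KB, 67.6 * MB)

print(f"NCDeval: {ncdeval_share:.1%} data")   # roughly 19%
print(f"TSusers: {tsusers_share:.3%} data")   # roughly 0.013%
```

So NCDeval is a bit under one fifth data, and TSusers is almost entirely forms and procedures.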

If auto-save is the source of these delays, that would seem to indicate that it is taking more than 10 seconds to save these files. Does that sound plausible to you? How long does it take if you just press Command-S? I don’t have any 100 MB files handy at the moment, but I just tried a 12 MB file and it saves in about a tenth of a second. But I don’t have any databases with tens of megabytes of forms in them. Maybe that is slower to save?
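If you want a baseline for how fast the disk itself can take a file of that size, a sketch like this could help. It only times a raw write of zero bytes from Python, so it says nothing about how Panorama saves a database; `time_write` is a hypothetical helper, and the `directory` argument is there so you can point it at the volume the databases live on.

```python
import os
import tempfile
import time

def time_write(num_bytes, directory=None):
    """Write num_bytes of zeros to a temp file, flush to disk, return seconds."""
    payload = bytes(num_bytes)                 # built before the timer starts
    fd, path = tempfile.mkstemp(dir=directory)
    try:
        start = time.perf_counter()
        with os.fdopen(fd, "wb") as f:
            f.write(payload)
            f.flush()
            os.fsync(f.fileno())               # ask the OS to push it to disk
        return time.perf_counter() - start
    finally:
        os.remove(path)                        # clean up the temp file
```

Calling `time_write(100 * 1_000_000)` times a 100 MB write. If that comes back in a fraction of a second but Command-S on the database takes many seconds, the raw disk speed is probably not the bottleneck.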

Another possibility I wonder about is whether the file system on the computer is somehow falling asleep and has to be woken up to save. I don’t think that’s a thing, especially on SSD based systems, which you are probably using. But maybe?

If the problem is that you have forms with tens of megabytes of static images in them, maybe that’s why this issue hasn’t been reported by anyone else. In that case, you might get a big performance boost by switching to Image Display objects, with the images in a separate folder instead of built into the forms themselves. Of course that would create an additional issue in that you would have to somehow distribute the images to all of the clients. Right now Panorama is taking care of that for you, but possibly at the cost of these performance issues.

This is all just an educated guess. The problem may have absolutely nothing to do with this at all. But I guess it does give you something to research further.